Who Really Wins the AI Policy Race? A Legal Technologist’s Perspective

Executive Summary

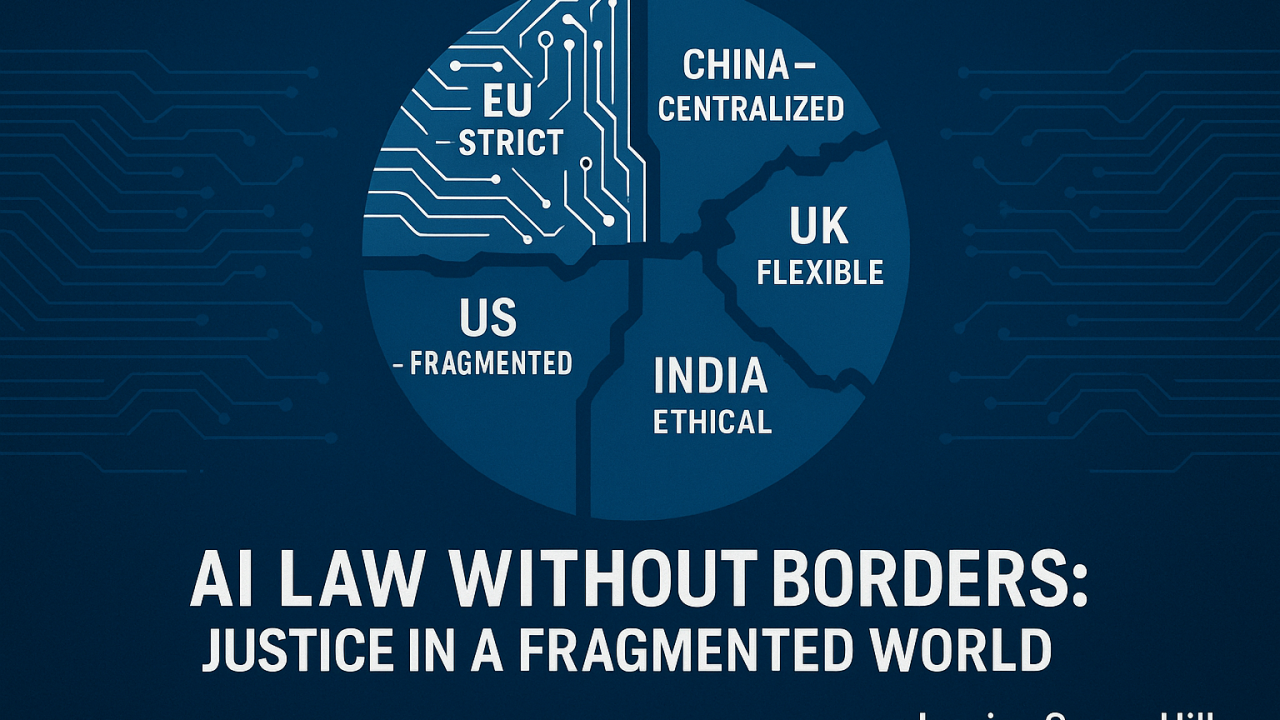

The global conversation about artificial intelligence (AI) regulation is often framed as a “race” between regions. The EU, US, China, UK, and emerging economies are each drafting rulebooks. Analysts such as Reiner Petzold describe this moment as a fragmented global competition where every government is running but no one is playing the same sport.

Yet from a legal-tech perspective, the framing of AI policy as a “race” is both incomplete and misleading. It is not only about speed of innovation versus caution of regulation. It is about law, justice, rights, and enforceability. Policies are only as strong as the courts that uphold them, and businesses cannot treat compliance as an optional box-tick.

This article expands the debate: not just who is “winning” AI policy on paper, but whether the world’s legal systems are equipped to govern AI in practice.

I. The Global AI Policy Landscape: A Patchwork Without a Passport

The recent Global State of AI Policies report maps how 36+ countries and regions approach AI. Some key highlights:

- European Union (EU): The most detailed framework, the EU AI Act, is risk-based and enforces strict requirements around fundamental rights. Penalties are high and enforcement is serious.

- United States (US): No single law; instead, a sector-by-sector approach. Healthcare and finance have rules, but elsewhere the patchwork leaves legal uncertainty. The focus is on innovation, not restriction.

- China: Centralized, security-first, and deeply interventionist. Regulations focus on social stability and surveillance while simultaneously pushing for global AI dominance.

- United Kingdom (UK): A flexible, principles-led, “regulate as you go” approach designed to balance innovation and adaptability.

- India: A phased, ethics-driven approach, seeking balance between economic opportunity and responsible AI.

- Others: From Singapore’s trust-based frameworks to Brazil’s EU-inspired risk-based proposals, and Africa’s emerging sandboxes, the variety is enormous.

The common theme: fragmentation. There is no “AI passport.” Companies face a compliance maze where exporting an AI product globally means redesigning for multiple, sometimes contradictory standards.

II. Why Fragmentation is More Than a Policy Problem

Reiner correctly noted that fragmentation is the defining feature of AI governance today. But as a future barrister and AI law strategist, I argue this is not just a regulatory inconvenience — it is a legal time bomb.

- Cross-border disputes are inevitable. Imagine an AI system built in California, deployed in Berlin, challenged in Delhi, and litigated in London. Whose law applies? Which court has jurisdiction? The EU AI Act? The US patchwork? India’s phased guidelines?

- Fragmentation undermines trust. If citizens cannot rely on a predictable legal standard, public confidence erodes. Law, unlike technology, depends on consistency. Justice cannot be relative to geography in a hyperconnected world.

- Fragmentation incentivizes regulatory arbitrage. Companies may choose to operate where oversight is weakest, much like tax havens. This undermines stronger jurisdictions and risks creating “AI havens” where accountability is absent.

III. The Legal Tensions Beneath the Policies

Behind the high-level policy statements are fundamental legal clashes:

1. Data Protection vs. National Security

- EU: GDPR + AI Act → strict rules on the lawful basis for processing (including consent) and on data handling.

- China: National security trumps individual rights. Data is a state asset.

- US: Privacy rules are fragmented; HIPAA (health), GLBA (finance), but no universal baseline.

This creates contradictions: an AI system lawful in Beijing could be unlawful in Brussels.

2. Transparency vs. Trade Secrets

- Regulators demand explainability.

- But AI developers argue explainability exposes intellectual property and competitive advantage.

- Courts will soon have to weigh “right to explanation” against “right to protect IP.”

3. Risk-Based Categorisation vs. Reality of Enforcement

- Risk levels (EU, Brazil, South Korea) sound logical.

- But enforcement requires resources, trained regulators, and local courts willing to test cases.

- Laws without teeth create false security.

4. Contract Law Meets AI

When an AI tool signs or interprets a contract, what legal weight does that carry? Contract law was never designed for machine agency. Courts will have to reinterpret doctrines like offer, acceptance, and intention in light of AI mediation. This is the area I find particularly exciting.

IV. Courts and Compliance: The Real Stress Test

The effectiveness of AI regulation will be tested not in policy documents but in courtrooms.

Case Scenario 1: Discrimination in Hiring

- AI screening tools flag certain candidates as “low suitability.”

- In the EU: the candidate may bring a challenge under anti-discrimination law and the AI Act’s provisions.

- In the US: an EEOC charge under federal law is possible, but additional protections vary by state.

- In China: a challenge is unlikely, as state interest may override individual claims.

Case Scenario 2: AI in Healthcare

- An AI-enabled device is approved by the FDA in the US but denied by regulators in the EU.

- A patient harmed in an EU member state could sue the manufacturer under EU law even though the device was compliant in the US.

Case Scenario 3: Cross-Border AI Contract

- An AI system negotiates a contract between a German firm and a US firm. A dispute arises.

- Which jurisdiction’s definition of “valid consent” applies? EU strictness or US flexibility?

Each scenario shows one truth: AI law will live or die in the courts, not in white papers.

V. Business and Justice Implications

For businesses:

- Compliance ≠ checklists. It requires anticipating litigation.

- Local readiness matters. Laws may be global in intent but local in enforcement.

- Strictest law sets the baseline. Many companies will design for EU compliance first, then scale.
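For readers who build compliance tooling, the "strictest law sets the baseline" idea can be sketched in a few lines of Python. This is a minimal illustration, not a statement of what any law requires: the control names, the three strictness levels, and every value in the table are hypothetical placeholders chosen only to show the mechanics.

```python
from enum import IntEnum


class Strictness(IntEnum):
    """Hypothetical ordering of how binding a requirement is."""
    NONE = 0
    GUIDANCE = 1
    MANDATORY = 2


# Illustrative placeholder values only. They are assumptions made for
# this sketch and do not describe the actual content of any regime.
REQUIREMENTS = {
    "EU": {
        "human_oversight": Strictness.MANDATORY,
        "transparency_notice": Strictness.MANDATORY,
        "bias_audit": Strictness.MANDATORY,
    },
    "US": {
        "human_oversight": Strictness.NONE,
        "transparency_notice": Strictness.GUIDANCE,
        "bias_audit": Strictness.GUIDANCE,
    },
    "UK": {
        "human_oversight": Strictness.GUIDANCE,
        "transparency_notice": Strictness.GUIDANCE,
        "bias_audit": Strictness.NONE,
    },
}


def strictest_baseline(markets, requirements=REQUIREMENTS):
    """Return, per control, the strictest level demanded by any target market."""
    baseline = {}
    for market in markets:
        for control, level in requirements[market].items():
            # Keep whichever level is stricter: the one seen so far or this one.
            baseline[control] = max(baseline.get(control, Strictness.NONE), level)
    return baseline


if __name__ == "__main__":
    for control, level in strictest_baseline(["US", "UK", "EU"]).items():
        print(f"{control}: {level.name}")
```

Under these placeholder values, adding the EU to the list of target markets lifts every control to the highest level, which is the design-for-the-strictest-regime logic in miniature. A real mapping would need legal review per jurisdiction and would rarely reduce to a single ordered scale.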

For justice systems:

- Access to justice gap widens. Citizens in strong-regulation countries (EU) can litigate AI harms; citizens in weaker-regulation countries cannot. This risks creating two classes of AI users: protected and unprotected.

- Legal inequality will become a geopolitical issue.

For law firms and legal departments:

- AI regulation is not an abstract policy space — it is the next big litigation wave. Think asbestos, tobacco, GDPR fines. AI will be bigger.

VI. Towards a Global Legal Framework?

Can the world agree on a unified AI legal framework?

- Possibility 1: Treaties. Bodies like the UN or OECD could push for shared principles, in effect a Paris Agreement-style accord for AI. But enforcement of such instruments tends to be weak.

- Possibility 2: De facto convergence. Companies may follow the strictest regime (EU AI Act), creating global standards by default.

- Possibility 3: Regional blocs. US, EU, China, India each create spheres of legal influence, leaving companies to “pick a bloc.”

The most realistic? De facto convergence. As with GDPR, global firms may treat EU law as the gold standard because it is easier to comply universally than to build fragmented compliance systems.

But convergence must not stop at risk categories and transparency checklists. It must embed fundamental rights, access to justice, and due process. Otherwise, AI will deepen inequalities.

VII. Conclusion: From Race to Rule of Law

Reiner asked: Who’s winning the AI policy race? My answer: no one wins a race where the finish line keeps moving.

Instead, the real question is: Will law, rights, and justice keep pace with AI?

The global AI landscape is not just fragmented — it is testing whether our legal systems are strong enough to protect citizens, hold companies accountable, and sustain public trust.

Leaders who succeed will not just build compliant products; they will anticipate litigation, embed rights from the design stage, and navigate courts as confidently as code.

For me, as an aspiring barrister and AI strategist, this is not only a professional challenge — it is a generational responsibility. The law must remain the anchor in a world racing to define the future of intelligence.

#AI #LegalTech #AIRegulation #FutureOfLaw #AccessToJustice #GlobalAI