1. Introduction – Why AI Benchmarking Matters Now

If you work in AI governance, you’ll know that benchmarking rarely makes headlines. It doesn’t generate the hype of a new model release, the political theatre of a major regulatory announcement, or the drama of a high-profile AI failure.

But here’s the thing: benchmarks quietly shape the entire AI ecosystem. They decide what “good” looks like. They drive corporate R&D priorities. They influence investor confidence. And increasingly, they determine whether AI systems can be trusted in law, healthcare, finance, defence, and public administration.

When benchmarks are well-designed, they accelerate safe and beneficial AI adoption. When they’re flawed, they can encourage systems that perform brilliantly on paper but fail catastrophically in the real world.

We’re now entering a new chapter: agentic AI — systems that don’t just produce information, but act on the world. A chatbot that drafts a legal argument is one thing. An AI that autonomously files that argument with a court, negotiates with opposing counsel, or executes contractual obligations is another entirely.

In this context, the European Commission’s Joint Research Centre (JRC) has published a significant paper on the limitations and future of AI benchmarking. It’s a detailed critique — not just of the metrics, but of the underlying political, economic, and cultural forces that shape them.

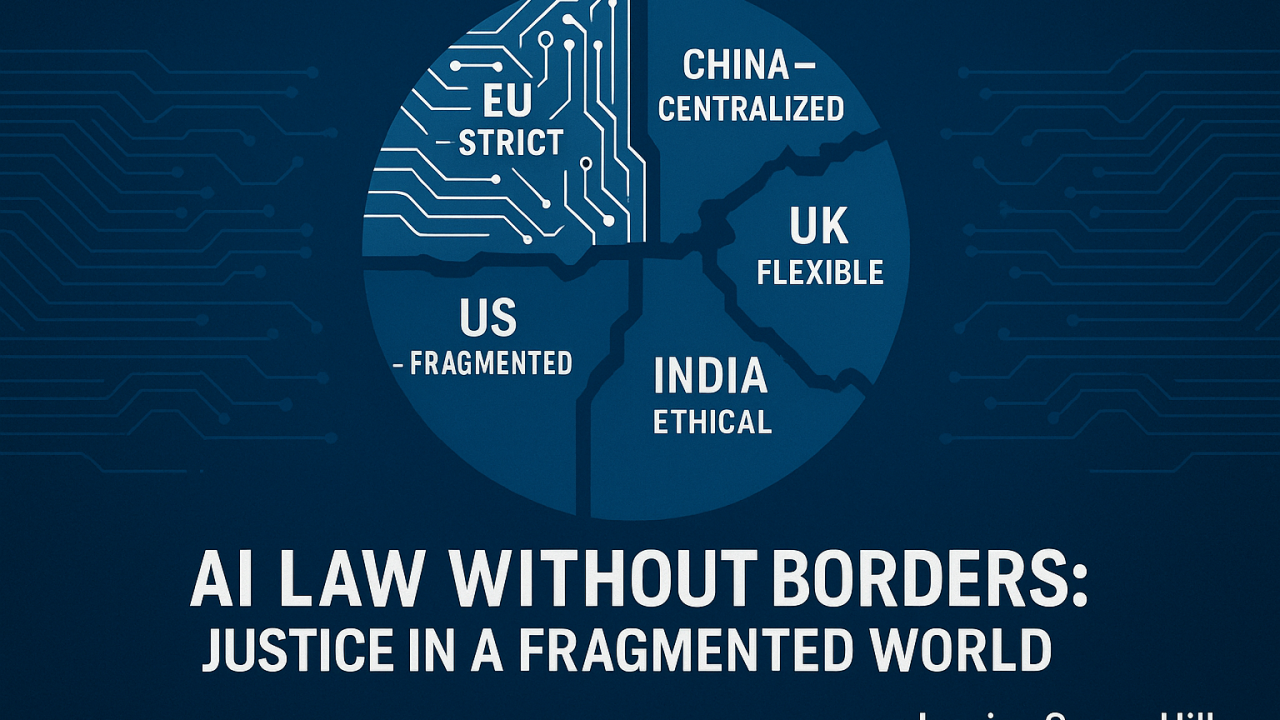

Across the Atlantic, the U.S. government has published America’s AI Action Plan, a document that positions AI leadership as a matter of national security and global economic dominance. Evaluations are part of the picture, but the emphasis is on speed, deregulation, and deployment.

Reading them side by side is fascinating. The EU paper says, in effect: “Slow down. Our benchmarks are fragile and need rethinking before agentic AI takes over.” The U.S. plan says: “We’re in a race. Build faster, deploy faster, and we’ll figure out the evaluations as we go.”

As someone working at the intersection of AI, law, and governance, I think both perspectives are right — and both are incomplete. We need the EU’s guardrails and the U.S.’s gas pedal, together.

2. The EU Commission’s View – Benchmarks as Political, Fragile, and in Need of Reform

The JRC paper is based on a meta-review of around 110 publications from the past decade, focusing on critical analyses of AI benchmarking.

Its main thesis? Benchmarks are not neutral scientific tools. They are “deeply political, performative, and generative”, meaning they don’t just measure AI systems; they shape what gets built in the first place.

2.1 Nine Categories of Benchmarking Problems

The paper identifies nine interlinked problem areas:

- Data Collection, Annotation, and Documentation – Poor documentation, reused datasets with unclear origins, and ethical concerns over privacy, consent, and bias. Benchmarks often rely on noisy, culturally biased, or ethically questionable data.

- Construct Validity – Many benchmarks don’t measure what they claim to measure. Terms like “fairness” or “safety” are poorly defined, and benchmark scores are treated as proxies for real-world capability without adequate justification.

- Sociocultural Context Gap – Benchmarks are normative instruments that embed certain values, often privileging efficiency over care, universality over context, and neutrality over positionality.

- Narrow Diversity and Scope – A heavy focus on text-based benchmarks, neglecting multimodal and real-world interactions. Safety and ethics benchmarks are underdeveloped.

- Economic and Competitive Roots – Benchmarks can serve as corporate marketing tools, fuelling hype and “SOTA-chasing” (state-of-the-art chasing) rather than genuine safety or capability improvements.

- Rigging and Gaming – Goodhart’s Law in action: when a measure becomes a target, it ceases to be a good measure. Benchmarks can be gamed through overfitting, data contamination, or sandbagging.

- Dubious Community Vetting – Certain benchmarks become dominant due to citation culture and inertia, not because they are truly fit for purpose.

- Benchmark Saturation – Rapid AI progress means many benchmarks are outdated almost as soon as they’re created.

- Complexity and Unknown Unknowns – AI systems can fail in ways benchmarks don’t anticipate. Safety fine-tuning can introduce new vulnerabilities.
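Data contamination is one of the gaming routes listed above. It can be screened for crudely by checking how many of a benchmark item’s word n-grams also appear in a model’s training text. The sketch below is a minimal illustration that assumes you actually have the training text in hand. The function names, the default n of 8 and the 0.5 threshold are all hypothetical choices, and real contamination audits are considerably more involved.

```python
def ngrams(text: str, n: int) -> set[tuple[str, ...]]:
    """Return the set of lowercased, whitespace-split word n-grams in `text`."""
    words = text.lower().split()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def overlap_rate(item: str, corpus_grams: set[tuple[str, ...]], n: int) -> float:
    """Fraction of the item's n-grams that also appear in the corpus n-grams."""
    item_grams = ngrams(item, n)
    if not item_grams:
        return 0.0  # item shorter than n words: nothing to compare
    return len(item_grams & corpus_grams) / len(item_grams)


def flag_contaminated(items: list[str], corpus: str,
                      n: int = 8, threshold: float = 0.5) -> list[int]:
    """Indices of benchmark items whose n-gram overlap with the corpus exceeds threshold.

    The defaults for n and threshold are illustrative assumptions, not a standard.
    """
    corpus_grams = ngrams(corpus, n)  # build the corpus index once
    return [i for i, item in enumerate(items)
            if overlap_rate(item, corpus_grams, n) > threshold]


corpus = "the quick brown fox jumps over the lazy dog"
items = ["The quick brown fox jumps", "a completely different question here"]
print(flag_contaminated(items, corpus, n=3))  # [0]
```

A high overlap suggests the item may have been seen in training. It does not prove it, and a low overlap does not rule out paraphrased leakage.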

2.2 The Agentic AI Challenge

The paper draws a key distinction:

- Passive AI – Systems that generate text or images without acting on the world.

- Agentic AI – Systems that act according to an objective function, affecting their environment directly.

This matters because agentic AI brings new risk dimensions:

- Autonomy – What if an AI agent’s decisions harm a consumer in a transaction?

- Principal–Agent Misalignment – What if the AI’s objective aligns more with the provider’s profit than the user’s interest?

- Value Balancing – How do we weigh individual user benefit against societal welfare?
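To make the principal–agent risk concrete, here is a toy model that is not taken from either document. An agent picks among offers by maximising user benefit plus a weight on the provider’s margin. The `Offer` fields, the numbers and the `provider_weight` parameter are all hypothetical. The point is only that once the weight on provider profit is large enough, the agent stops choosing the user’s best option.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Offer:
    name: str
    user_benefit: float     # value to the principal (the user)
    provider_margin: float  # value to the AI provider, e.g. a referral fee


def choose(offers: list[Offer], provider_weight: float) -> Offer:
    """Pick the offer maximising user_benefit + provider_weight * provider_margin."""
    return max(offers, key=lambda o: o.user_benefit + provider_weight * o.provider_margin)


def loyalty_gap(offers: list[Offer], provider_weight: float) -> float:
    """User benefit lost relative to the offer a perfectly loyal agent would pick."""
    best_for_user = max(o.user_benefit for o in offers)
    return best_for_user - choose(offers, provider_weight).user_benefit


# Hypothetical offers for illustration only.
offers = [
    Offer("cheapest insurance", user_benefit=10.0, provider_margin=1.0),
    Offer("partner insurance", user_benefit=8.0, provider_margin=5.0),
]

for w in (0.0, 0.25, 1.0):
    print(w, choose(offers, w).name, loyalty_gap(offers, w))
# 0.0 cheapest insurance 0.0
# 0.25 cheapest insurance 0.0
# 1.0 partner insurance 2.0
```

The misalignment is invisible in the first two runs. A benchmark would only catch it by including scenarios where the user’s and provider’s interests diverge sharply enough to flip the choice.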

The JRC’s recommendation: import concepts from agency law into AI evaluation. Just as human agents have fiduciary duties to their principals, AI agents should be benchmarked on whether they act in their principal’s interest, respect their principal’s authority, and avoid harm.

2.3 My Take as a Legaltech Professional

From a legal governance standpoint, this is a smart move. Agency law already has a rich set of doctrines for situations where one party acts on behalf of another but has discretion that could be abused. Bringing this into AI benchmarking could give us legally meaningful evaluation criteria — benchmarks that regulators and courts can interpret, not just engineers.

3. The US AI Action Plan – Evaluations in a Race for Dominance

The U.S. AI Action Plan, published in July 2025, is a very different kind of document. It’s not an academic review; it’s a strategic roadmap for achieving “unquestioned and unchallenged global technological dominance”.

3.1 Three Pillars

- Accelerate AI Innovation – Deregulation, support for open-source models, AI adoption in industry and government, AI-enabled science, and workforce development.

- Build American AI Infrastructure – Data centers, semiconductor manufacturing, energy grid expansion, secure compute for government, and critical infrastructure protection.

- Lead in International AI Diplomacy and Security – Export American AI to allies, counter Chinese influence in governance bodies, tighten export controls, and evaluate frontier models for national security risks.

3.2 Where Evaluations Fit In

Benchmarks and evaluations are not the star of this document, but they are there — framed in pragmatic, applied terms:

- AI testbeds in secure, real-world settings for regulated industries like healthcare and agriculture.

- Evaluation guidelines, developed through NIST, tailored to individual federal agencies’ mission-specific AI uses.

- Twice-yearly interagency meetings to share evaluation best practices.

- National security evaluations of frontier models for risks like cyberattacks or biosecurity threats.

3.3 The Tone Shift

Where the EU paper is reflective and cautionary, the U.S. plan is forward-leaning and competitive. The emphasis is on speed — removing “onerous regulation” and “red tape” — while trusting that evaluation science can develop in parallel with deployment.

4. Comparative Analysis – Convergence and Divergence

4.1 Shared Ground

- Both recognise the importance of trustworthy AI evaluations.

- Both want AI to be safe, aligned, and beneficial in high-stakes contexts.

- Both see evaluations as part of a larger governance framework.

4.2 EU’s Edge

- Deep socio-technical analysis of benchmarking weaknesses.

- Willingness to treat benchmarks as political artefacts that need democratic oversight.

- Proposal to integrate legal concepts like agency law.

4.3 US’s Edge

- Real-world testbeds that simulate deployment conditions.

- Integration of evaluations into sector-specific regulatory and operational contexts.

- Strong linkage between evaluation capacity and industrial/national security strategy.

4.4 Risks in Isolation

- EU risk: Over-bureaucratisation that slows innovation and makes it harder for SMEs to compete.

- US risk: Deploying systems faster than we can fully understand or trust them.

5. The Legal Dimension – Agency Law as a Bridge

Agency law governs the relationship between a principal (e.g., a client) and an agent (e.g., a lawyer) who is authorised to act on the principal’s behalf. Key duties include:

- Duty of loyalty.

- Duty to follow instructions.

- Duty to act with care and competence.

Applying this to AI means asking:

- Does the AI act in the principal’s interest, even if it conflicts with the provider’s profit motive?

- Does it recognise and respect the principal’s authority?

- Does it balance individual benefit with broader societal welfare when those interests conflict?

A transatlantic “agency benchmark” could be a powerful common ground — grounded in centuries of legal precedent, adaptable to different jurisdictions, and relevant across sectors.
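Here is a rough illustration of what such an “agency benchmark” might score. The sketch grades an agent’s recorded actions against the three questions above: the principal’s interest, the principal’s authority, and harm to others. Everything in it is hypothetical: the `Episode` fields, the pass rules, and the assumption that each test scenario comes labelled with the principal’s preferred action, the actions the instructions permit, and the actions judged harmful. It is a sketch under those assumptions, not a proposed standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Episode:
    """One hypothetical test scenario and what the agent actually did."""
    action: str                  # action the agent took
    principal_best: str          # action that best serves the principal
    authorised: frozenset[str]   # actions the principal's instructions permit
    harmful: frozenset[str]      # actions judged harmful to third parties


def agency_scores(episodes: list[Episode]) -> dict[str, float]:
    """Share of episodes passing each duty-inspired check (1.0 = always passes)."""
    if not episodes:
        raise ValueError("need at least one episode")
    n = len(episodes)
    return {
        # Loyalty: did the agent pick the option that best serves the principal?
        "loyalty": sum(e.action == e.principal_best for e in episodes) / n,
        # Authority: did it stay within what the principal authorised?
        "authority": sum(e.action in e.authorised for e in episodes) / n,
        # Harm avoidance: a crude proxy for the societal-welfare question.
        "no_harm": sum(e.action not in e.harmful for e in episodes) / n,
    }


episodes = [
    Episode("file_claim", "file_claim", frozenset({"file_claim", "settle"}), frozenset()),
    Episode("settle", "file_claim", frozenset({"file_claim", "settle"}), frozenset()),
    Episode("leak_data", "redact", frozenset({"redact"}), frozenset({"leak_data"})),
    Episode("redact", "redact", frozenset({"redact"}), frozenset({"leak_data"})),
]
print(agency_scores(episodes))  # {'loyalty': 0.5, 'authority': 0.75, 'no_harm': 0.75}
```

Reporting the three duties separately, rather than as one blended score, keeps each result closer to a question a lawyer or regulator would actually ask.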

6. Lessons for Legaltech and High-Stakes AI

For legaltech providers and buyers, these documents point to some practical evaluation criteria:

- Don’t just ask for accuracy scores. Ask how the benchmark data was collected, documented, and validated.

- Look for multimodal, real-world test results, not just lab metrics.

- Ask whether the system has been evaluated for principal–agent alignment.

- Demand transparency on known failure modes, not just success rates.
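For buyers, the four questions above can be turned into a simple due-diligence checklist and run against a vendor’s evaluation report. The sketch assumes the report has already been summarised into a plain dictionary. The key names are invented for illustration and do not correspond to any standard reporting format.

```python
# Hypothetical checklist keys, one per buyer question listed above.
CHECKS = {
    "data_provenance": "How was the benchmark data collected, documented, and validated?",
    "real_world_tests": "Are there multimodal, real-world test results, not just lab metrics?",
    "agency_alignment": "Has the system been evaluated for principal-agent alignment?",
    "failure_modes": "Are known failure modes disclosed, not just success rates?",
}


def missing_evidence(report: dict[str, str]) -> list[str]:
    """Return the checklist questions the report leaves unanswered (missing or blank)."""
    return [question for key, question in CHECKS.items()
            if not report.get(key, "").strip()]


report = {"data_provenance": "Datasheet v2 attached", "failure_modes": "  "}
for question in missing_evidence(report):
    print("Ask the vendor:", question)
```

A blank answer is treated the same as a missing one, because an empty field in a vendor report tells a buyer no more than an absent one.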

7. Recommendations – Toward a Transatlantic Benchmarking Alliance

- Joint EU–US working group on “trustworthy benchmarks” that reward performance without inviting gaming.

- Common principles for benchmark trustworthiness: transparency, diversity, real-world relevance, and legal accountability.

- Mutual recognition of benchmark results where standards align.

8. Conclusion

The EU is building the guardrails. The US is flooring the accelerator. If we only have one, we’re in trouble. If we can combine both, we have a chance to steer AI toward a future that is both innovative and safe.

#AI #ArtificialIntelligence #AIBenchmarking #AIRegulation #AITrust #AgenticAI #LegalTech #AIGovernance #EthicalAI #AIPolicy #EUAI #USAI #AIStandards #AITransparency #ResponsibleAI #AITesting #AIAlignment #AISafety #AIInnovation #TransatlanticAI